“Stop watching me.”

That’s an actual message a user typed into a search bar, captured during session monitoring. They weren’t talking to customer support. They were talking to the algorithm.

It sounds absurd until you realize how common this is. When users believe a human is behind your personalization system, attributing consciousness to your automated algorithms, everything changes. Their behavior becomes erratic. Your conversions tank. And nobody talks about it.

The Ghost in the Machine

According to a recent Pew study, most US adults don’t even realize their daily digital experiences are personalized with AI. This gap in understanding creates something fascinating: folk theories.

Users invent explanations for things they don’t understand. Remember when Microsoft unveiled Recall, that feature that tracks everything from web browsing to voice chats? Within days, forums exploded with posts like “Microsoft Copilot is Spying on Me.”

Not “Microsoft’s algorithm is collecting data.”

“Microsoft is spying on me.”

That single word, “spying,” reveals everything. Users don’t see code. They see intent. They see a person.

The Three Theories Users Live By

Through session monitoring, we see three dominant beliefs:

The Eavesdropper Theory: “My phone is listening to my conversations.” Every marketer has heard this one. A user talks about dog food, sees a dog food ad, and becomes convinced their device is eavesdropping.

The Stalker Theory: “Someone is watching my every click.” Users modify their behavior, browse “decoy” content, and try to throw off their imagined watcher.

The Mind Reader Theory: “It knows things I never told it.” This is the most unsettling of the three: personalization so accurate it feels like telepathy.

These aren’t edge cases. These beliefs drive real behavior that destroys real metrics.

When Users Perform for the “Watcher”

Here’s what session replay reveals: When users believe they’re being watched by a human, they put on a show.

They’ll browse “respectable” content before visiting what they really want. Add items to cart they have no intention of buying. Search for random products to “confuse the system.” One user spent ten minutes clicking every category on an e-commerce site in rapid succession, apparently trying to overload their observer.

Some try to communicate. They type messages in search bars: “show me better deals if you’re watching” or “I know you can see this.” They leave notes in feedback forms addressed to their imaginary observer. They negotiate with the void.

Others rebel. They deliberately click irrelevant recommendations. Clear cookies obsessively. Create false patterns. It’s digital protest against an oppressor that doesn’t exist.

The Business Cost of Digital Paranoia

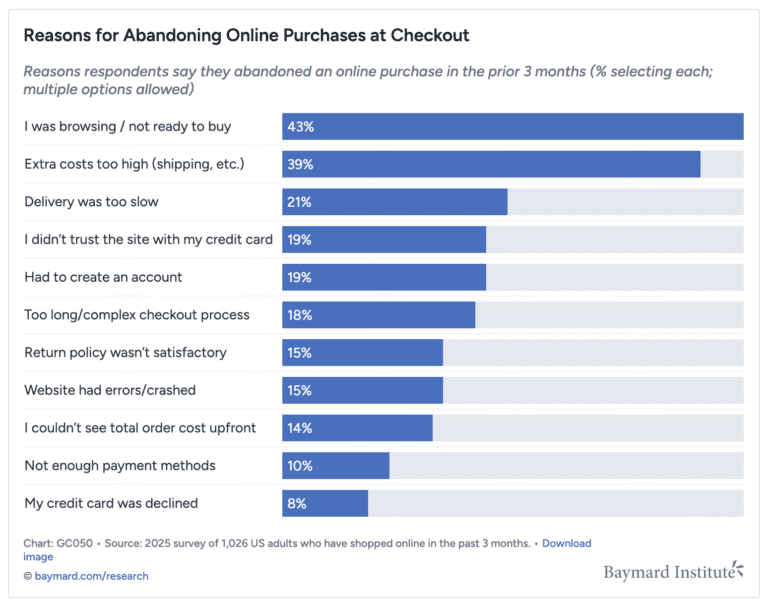

Nearly half (45%) of customers abandon brands that repeatedly show the same advertisement, according to Marketing Week. But that’s just repetition. When users think you’re watching them? The damage multiplies.

Session monitoring shows the pattern clearly:

- First, they check privacy settings

- Then they test the system with fake searches

- Finally, they either adapt (perform) or abandon (leave)

The ones who stay aren’t really present. They’re performing a version of themselves they think you want to see. Their data becomes worthless. Your personalization, trained on false signals, gets worse. It’s a death spiral that starts with a misunderstanding.

The Uncomfortable Truth About “Creepy”

We love to talk about the “creepy valley” of personalization, that point where helpful becomes harmful. But we’re missing something crucial: 87% of customers are willing to share personal information for personalized experiences. Among Gen Z, it’s 94%.

People want personalization. They just don’t want to feel watched.

The difference matters. When Netflix recommends a movie because you watched similar films, that’s an algorithm. When it feels like someone at Netflix is monitoring your viewing habits at 2 AM, that’s surveillance.

Same data. Same algorithm. Completely different user experience.

Breaking the Illusion

The solution isn’t less personalization. It’s killing the ghost in the machine.

Show the machinery: Spotify nails this with “Because you listened to…” It’s not mysterious. It’s mechanical. Users see cause and effect, not consciousness.

Use robot language: “Our system automatically suggests” beats “We recommend for you.” One implies code. The other implies people.

Delay responses: Instant reactions feel human. A two-second processing animation reminds users they’re dealing with a machine.

Respect complexity: Users aren’t one person. They’re professionals on LinkedIn, creators on Instagram, shoppers on Amazon. When your algorithm can’t keep up with human complexity, it feels like judgment. “The algorithm thinks I’m X” becomes “They think I’m X.”
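The first two tips, showing the machinery and using system language, can be combined in the label attached to each recommendation. Here is a minimal sketch in Python, assuming a hypothetical `Recommendation` record that remembers which past activity triggered it. The class, field names, and wording are illustrative assumptions, not any product's actual API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recommendation:
    """A recommended item plus the past activity that triggered it (hypothetical shape)."""
    title: str
    triggered_by: tuple[str, ...]  # titles the user actually interacted with
    verb: str = "viewed"           # e.g. "listened to", "watched", "bought"


def explanation_label(rec: Recommendation, max_sources: int = 2) -> str:
    """Build a mechanical, cause-and-effect label for a recommendation."""
    sources = rec.triggered_by[:max_sources]
    if not sources:
        # No traceable cause: say so plainly rather than implying a person chose it.
        return "Suggested automatically based on popular items."
    return f"Suggested automatically because you {rec.verb} {' and '.join(sources)}."


rec = Recommendation(
    title="Blue Train",
    triggered_by=("Kind of Blue", "A Love Supreme", "Giant Steps"),
    verb="listened to",
)
print(explanation_label(rec))
```

The label names a cause the user can verify and avoids “we” entirely, so the recommendation reads as the output of a rule rather than a choice someone made.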

What Session Monitoring Actually Reveals

Here’s what we should be watching for:

- Messages typed in search bars that aren’t searches

- Privacy page visits within the first five clicks

- Erratic navigation patterns (the digital equivalent of looking over your shoulder)

- Sudden logouts after personalized recommendations

- The phrase “how did you know” in any user feedback

These aren’t bugs. They’re features of a system that’s accidentally convinced users it’s human.
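Most of these signals can be flagged automatically from an ordered event log. The sketch below is one rough way to do it in Python, assuming a hypothetical `Event` record with a `kind` and a `value`. The event kinds, the phrase list, and the signal names are all assumptions made for illustration. Erratic navigation is left out because it needs timing data and a tuned threshold.

```python
import re
from dataclasses import dataclass

# Hypothetical phrase list; a real deployment would tune this against its own logs.
ADDRESSING_PATTERN = re.compile(
    r"\b(stop watching|i know you|are you watching|if you're watching|you can see)\b"
)


@dataclass(frozen=True)
class Event:
    kind: str        # assumed kinds: "click", "search", "recommendation", "logout", "feedback"
    value: str = ""  # page path, query text, or feedback text


def anthropomorphism_signals(events: list[Event]) -> set[str]:
    """Return the names of the signals found in one session's ordered events."""
    signals: set[str] = set()
    clicks_seen = 0
    previous_kind = None
    for event in events:
        # Lowercase and normalize curly apostrophes so phrase matching is consistent.
        text = event.value.lower().replace("\u2019", "'")
        if event.kind == "click":
            clicks_seen += 1
            if clicks_seen <= 5 and "privacy" in text:
                signals.add("early_privacy_visit")
        elif event.kind == "search" and ADDRESSING_PATTERN.search(text):
            signals.add("message_in_search_bar")
        elif event.kind == "feedback" and "how did you know" in text:
            signals.add("how_did_you_know")
        elif event.kind == "logout" and previous_kind == "recommendation":
            signals.add("logout_after_recommendation")
        previous_kind = event.kind
    return signals


session = [
    Event("click", "/home"),
    Event("click", "/privacy-settings"),
    Event("search", "Stop watching me."),
    Event("recommendation", "/item/42"),
    Event("logout"),
]
print(sorted(anthropomorphism_signals(session)))
```

A keyword list like this will miss plenty of phrasings and occasionally flag a genuine search, so treat the output as a prompt to review the session replay, not as a verdict.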

The Path Forward

As the technology gets better, the problem gets worse. LLMs make it harder to distinguish human from machine. Every advancement in AI makes anthropomorphism more likely, not less.

But here’s the thing: Users don’t need to understand machine learning. They need to understand that there’s no human behind the curtain. No one is watching. No one is judging. It’s just math responding to clicks.

The most sophisticated personalization system in the world fails if users think someone’s watching them through it. Because when users believe your algorithm is human, they stop being themselves. And personalization without authentic behavior is just expensive guesswork.

Monitor for anthropomorphism. Design against it. The goal isn’t to seem human; it’s to be helpful. And you can’t be helpful to someone who’s performing for an audience that doesn’t exist.