On September 25, 2025, the U.S. Federal Trade Commission announced a $2.5 billion settlement with Amazon: a $1 billion civil penalty, which the agency described as the largest ever in a case involving an FTC rule violation, plus $1.5 billion in refunds to consumers. The charge? Deceptive design patterns that made Prime enrollment trivially easy while making cancellation deliberately difficult.

This wasn’t a slap on the wrist for questionable marketing. This was a ten-figure penalty for interface design choices.

Let that sink in: specific UX decisions—button placements, flow structures, copy choices—just became a $2.5 billion liability.

If you’re a product designer, UX lead, or growth PM who’s ever A/B tested your way into a “conversion win” by making the cancel button harder to find, adding friction to the opt-out flow, or burying the “no thanks” option in confusing copy—you’re now designing in a fundamentally different risk environment.

Welcome to the era where dark patterns aren’t just ethically questionable. They’re financially material. The term itself dates to 2010, when UX designer Harry Brignull coined “dark patterns” to draw attention to manipulative design techniques.

The Amazon settlement demonstrates that dark patterns expose companies to serious legal risk, including regulatory fines and sanctions. The cost goes beyond financial penalties: it also includes lasting damage to brand reputation, user trust, and long-term customer relationships.

Introduction to Dark Patterns

Dark patterns are manipulative design techniques embedded within user interfaces that intentionally trick or steer users into making choices they might not otherwise make. These deceptive tactics are widespread across today’s digital platforms, from e-commerce sites to social media apps and many subscription services. The core aim of dark patterns is to influence user behavior—often by nudging, pressuring, or confusing users—so they take actions that primarily benefit the business, such as automatically enrolling in paid plans, sharing more data, or making unintended purchases.

You’ll find dark patterns in everything from shopping carts that quietly add extra items, to pop-ups that use guilt-inducing language to discourage users from opting out, to fine print that conceals extra costs or traps users in recurring payments. These manipulative techniques can take many forms: misleading visual cues, confusing language, or requiring users to click through multiple pages just to cancel a subscription.

As digital services become more central to daily life, understanding how dark patterns affect user autonomy is crucial. By learning to spot these deceptive practices, users can better protect themselves from being pushed or tricked into decisions that aren’t in their best interest. For businesses and UX designers, the growing awareness and regulation of dark patterns signal a clear need to prioritize user-centric design and ethical alternatives, because in today’s digital landscape manipulative design is no longer just a bad look; it’s a significant legal and financial risk.

The Amazon Settlement: A Line-Item Breakdown of Dark Patterns in UX Decisions

What makes the Amazon case particularly significant isn’t just the dollar amount. It’s the specificity.

The FTC didn’t allege vague “consumer harm” or abstract “unfair practices.” It detailed exact interface elements: the number of clicks required to cancel versus subscribe, the confusing language used in cancellation flows, and the strategic deployment of interruption screens designed to discourage users from completing the cancellation process.

In other words, the settlement reads like a UX audit. And the price tag makes it clear: these weren’t just “aggressive growth tactics” or “conversion optimization best practices.” They were deceptive trade practices with enforceable consequences.

The FTC’s complaint specifically called out Amazon’s cancellation process, internally codenamed “Iliad” after Homer’s epic about the long, arduous Trojan War, for being intentionally labyrinthine. The implication was unavoidable: if your internal team names the cancellation flow after a decade-long siege, you probably know exactly what you’re building.

Europe Is Naming Names, and Naming Patterns, Under the Digital Services Act

If you thought this was a uniquely American enforcement moment, the European Union has news for you.

Recent enforcement actions under the Digital Services Act (DSA) have explicitly cited “dark patterns” in findings against Meta and TikTok. The EU isn’t just pursuing companies for general violations of user rights; it is identifying specific interface design techniques and labeling them as DSA breaches. The General Data Protection Regulation (GDPR) sits alongside the DSA as a second legal framework, protecting user privacy and requiring transparency about how personal data is collected and used.

This matters because it establishes something crucial: regulatory language is catching up to design language. When enforcers can name the pattern, they can regulate it. When they can regulate it, they can price it. The question of whether dark patterns are illegal in the EU is still evolving, as ongoing legal challenges and enforcement actions continue to shape the boundaries of what is permissible.

“Dark pattern” is no longer industry jargon or academic terminology. It’s enforcement vocabulary. Regulatory documents also refer to these manipulative practices as “deceptive patterns,” highlighting their ethical and legal implications. And once a practice has a name in regulatory documents, that practice has a measurable risk profile in today’s digital landscape.

The Accountability Layer for User Autonomy That Didn’t Exist Before

Here’s what’s changing in practical terms: UX design is about to get an audit trail.

In traditional financial controls, every significant decision leaves a paper trail. Who approved this expenditure? What was the justification? What alternatives were considered? When regulations tighten or investigations begin, companies can demonstrate due diligence—or they can’t.

UX has largely operated without that accountability layer. Decisions about button copy, flow structure, and interface hierarchy have lived in design files and Slack threads, not formal decision logs. There’s rarely documentation of intent, consideration of alternatives, or explicit sign-off on choices that might disadvantage users. Ethical design practices are now essential to foster accountability and ensure that user interests are prioritized.

That era is ending.

As conversion design becomes a compliance function in addition to a growth function, expect the infrastructure to follow:

Decision logs: Documentation of why specific interface choices were made, what alternatives were considered, and who approved potentially high-friction patterns, so that responsibility for protecting users from manipulative design rests with a named person.

Cancellation-path instrumentation: Detailed tracking not just of who cancels, but specifically where in the cancellation flow they succeed or abandon—because if users are dropping out halfway through your cancellation process, that’s now evidence of obstruction, not just a funnel metric.

Reviewable interface intent: Clear, auditable records of what each screen, button, and flow is designed to accomplish—and whether that intent aligns with user interest or primarily serves company interest. These processes are critical for maintaining user trust and demonstrating transparency.
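As a rough illustration of the cancellation-path instrumentation described above, the sketch below counts how far each session got through a cancellation flow and where it stopped. The step names, the session data, and the `drop_off_report` helper are all hypothetical; a real flow would take its steps from your own analytics events.

```python
from collections import Counter

# Hypothetical ordered steps of a cancellation flow (illustrative names only).
CANCEL_STEPS = ["open_settings", "click_cancel", "retention_offer", "confirm_cancel"]


def furthest_step(events: list[str]) -> int:
    """Return the index of the furthest step a session reached, or -1 if none."""
    reached = -1
    for i, step in enumerate(CANCEL_STEPS):
        if step in events:
            reached = i
    return reached


def drop_off_report(sessions: dict[str, list[str]]) -> dict[str, dict[str, float]]:
    """For each step, report how many sessions reached it and what share stopped there."""
    furthest = Counter(furthest_step(events) for events in sessions.values())
    last = len(CANCEL_STEPS) - 1
    report = {}
    for i, step in enumerate(CANCEL_STEPS):
        reached = sum(n for idx, n in furthest.items() if idx >= i)
        # Stopping at the final step means the cancellation succeeded, not abandoned.
        abandoned = furthest[i] if i < last else 0
        report[step] = {
            "reached": reached,
            "abandon_rate": abandoned / reached if reached else 0.0,
        }
    return report


# Made-up sessions: one completes, two stop at the retention offer, one stops earlier.
sessions = {
    "s1": ["open_settings", "click_cancel", "retention_offer", "confirm_cancel"],
    "s2": ["open_settings", "click_cancel", "retention_offer"],
    "s3": ["open_settings", "click_cancel", "retention_offer"],
    "s4": ["open_settings", "click_cancel"],
}
report = drop_off_report(sessions)
for step, stats in report.items():
    print(f"{step}: reached={stats['reached']} abandon_rate={stats['abandon_rate']:.2f}")
```

In this made-up data, two of the three sessions that reach the retention offer stop there. A spike like that on an interruption screen is the kind of pattern worth reviewing before a regulator does.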

If this sounds like overkill, remember: Amazon just agreed to pay $2.5 billion rather than keep defending its cancellation flow in court as something designed with user clarity in mind.

‘Growth Wins’ That Don’t Survive Scrutiny Are Actually Liabilities

Here’s the mental model shift every product org needs to make: that A/B test winner that reduced cancellations by 15% might not be an asset—it might be a future liability sitting on your balance sheet.

If the mechanism of that 15% win was clarity and improved communication, you’re fine. If the mechanism was confusion, friction, or misdirection—even if users technically “could” still cancel if they really wanted to—you’ve potentially created something that won’t survive regulatory scrutiny. Some conversion optimization tactics may benefit the company at the user’s expense, undermining trust and exposing the business to risk.

And the precedent being set is clear: “users could technically do it if they tried hard enough” is not a defense. The FTC’s Amazon case explicitly addresses the difficulty of cancellation, not the theoretical possibility of it.

This means growth teams need to start asking a new question before shipping that conversion-boosting experiment: “How would we defend this design choice in a deposition?” Balancing business goals with user interests is essential to ensure that design strategies are both effective and defensible.

If the answer involves phrases like “strategic friction,” “decision fatigue,” or “cognitive load management” applied to user-unfavorable actions, you might want to reconsider. Manipulative tactics not only risk regulatory action but also negatively impact user satisfaction, eroding trust and long-term loyalty.

What ‘Auditability’ Actually Looks Like in Practice

For teams wondering how to operationalize this shift, here’s what moving toward auditable UX design might look like:

1. Document design intent explicitly

Every material interface decision—especially those affecting subscription, cancellation, data sharing, or consent—should have a written rationale. Not just “this performed better in testing,” but “we chose this because it provides clarity on X while allowing users to easily Y.” It is essential to provide users with clear information and control over their actions to ensure transparency and trust.

2. Instrument reciprocal actions symmetrically

If you’re tracking sign-up funnel performance with 47 analytics events, you should be tracking cancellation funnel performance with equal granularity. Asymmetric instrumentation reveals asymmetric intent—and makes it very hard to claim you were “optimizing for user experience” rather than “optimizing for retention at user expense.”

3. Create explicit escalation criteria for high-friction patterns

Establish clear guidelines: If a design choice adds more than X clicks to a user-favorable action (like canceling) compared to a company-favorable action (like subscribing), it requires director-level approval and documented business justification. Make friction visible to people who understand legal risk, not just people who understand conversion metrics.

4. Test discoverability, not just completion

It’s not enough that users can cancel if they manage to find the right sequence of actions. Can they easily cancel? Test this by bringing in users who’ve never seen your interface and asking them to complete the cancellation flow with no guidance. If they struggle, you have a problem—and increasingly, you have legal exposure.
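The symmetry and escalation checks in steps 2 and 3 can be expressed as a simple automated gate. The sketch below is one possible shape, not an established standard: the thresholds `MAX_EXTRA_CLICKS` and `MIN_EVENT_RATIO`, the `FlowPair` record, and the example numbers are assumptions chosen for illustration, standing in for whatever value of “X” your organization agrees on.

```python
from dataclasses import dataclass

# Assumed policy thresholds; placeholders for values your legal and design leads would set.
MAX_EXTRA_CLICKS = 2    # extra clicks cancel may need beyond subscribe before escalation
MIN_EVENT_RATIO = 0.5   # cancel-funnel analytics events as a share of sign-up events


@dataclass
class FlowPair:
    """A company-favorable action paired with its user-favorable reciprocal."""
    name: str
    subscribe_clicks: int
    cancel_clicks: int
    subscribe_events: int  # analytics events instrumenting the sign-up funnel
    cancel_events: int     # analytics events instrumenting the cancellation funnel


def review_flags(pair: FlowPair) -> list[str]:
    """Return the reasons this pair needs escalated review (empty list if none)."""
    flags = []
    extra_clicks = pair.cancel_clicks - pair.subscribe_clicks
    if extra_clicks > MAX_EXTRA_CLICKS:
        flags.append(f"click asymmetry: cancel needs {extra_clicks} more clicks than subscribe")
    if pair.subscribe_events > 0:
        ratio = pair.cancel_events / pair.subscribe_events
        if ratio < MIN_EVENT_RATIO:
            flags.append(
                f"instrumentation asymmetry: cancel funnel has {ratio:.0%} "
                "of the sign-up funnel's events"
            )
    return flags


# Made-up example flows.
membership = FlowPair("membership", subscribe_clicks=2, cancel_clicks=6,
                      subscribe_events=47, cancel_events=5)
newsletter = FlowPair("newsletter", subscribe_clicks=1, cancel_clicks=1,
                      subscribe_events=10, cancel_events=10)

for pair in (membership, newsletter):
    flags = review_flags(pair)
    print(pair.name, "-> needs escalation" if flags else "-> ok", flags)
```

A check like this could run whenever a flow definition changes, so that a flagged pair is routed to the approver named in your escalation criteria rather than being discovered after launch.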

When handling data sharing and consent, prioritize ethical data practices by ensuring transparency in data collection and respecting user privacy. Consent interfaces should be designed to obtain explicit consent from users, making sure they are fully informed and in control of their choices.

The Evolution From ‘Growth Hacking’ to ‘Growth Due Diligence’

For the past decade, “growth hacking” has been a celebrated term in product circles—a badge of honor for teams that found creative, unconventional ways to drive user acquisition and retention.

But many growth hacks were actually dark patterns with better marketing: deceptive design strategies that manipulate users into unintended actions. The difference between “clever conversion optimization” and “deceptive interface design” often came down to whether you were willing to look at it from the user’s perspective or exclusively from the company’s, and whether you treated ethical design as a priority.

The Amazon settlement marks an inflection point: growth tactics that rely on user confusion, friction asymmetry, or exploitation of cognitive biases to nudge users toward specific actions are no longer just ethically questionable—they’re priced liabilities.

Smart organizations are already making the shift from “growth hacking” to what we might call “growth due diligence”—the practice of pursuing conversion optimization within constraints that will survive regulatory scrutiny and public disclosure.

This doesn’t mean abandoning persuasion or optimization. It means abandoning deception and obstruction as tools in the optimization toolkit.

What Changes in 2026

If the Amazon case and EU enforcement actions are the warning, here’s what the response will look like as we move through 2026:

Legal review of UX decisions becomes standard practice

Expect subscription flows, cancellation processes, and consent interfaces to go through legal review the same way terms of service and privacy policies do now. Design won’t just answer to product anymore—it will answer to compliance.

Third-party UX audits become a thing

Just as companies hire external auditors for financial controls, expect a market to emerge for independent UX auditors who can certify that your interfaces meet non-deceptive design standards. Ensuring user-friendly interfaces will be crucial for meeting compliance requirements and building trust. This will be especially important for companies pursuing IPOs or M&A, where undisclosed interface liability could affect valuations.

Insurance pricing starts accounting for interface risk

Cyber liability insurance already prices in data security practices. Expect D&O insurance and general liability policies to start asking questions about your cancellation flows, consent mechanisms, and interface design processes. High-risk patterns will carry higher premiums.

Competitive advantage shifts to transparency

Early adopters of clearly auditable, user-favorable interface design will be able to market it as a trust signal. Companies that avoid dark patterns and prioritize transparency can differentiate themselves in the market. “We make it as easy to cancel as it is to subscribe” becomes not just good ethics but good positioning—especially as competitors deal with enforcement actions. Transparent and ethical practices not only build trust but also enhance user experience, fostering long-term loyalty.

The Uncomfortable Truth

Here’s what many product teams don’t want to hear: some of your best-performing experiments probably won’t survive this new regulatory environment.

That test that reduced churn by 12% by making the “Confirm Cancellation” button less prominent? Liability.

That flow redesign that boosted conversions by 23% by moving the “No Thanks” option to a second modal that appears after users click “Maybe Later”? Liability.

That copy change that decreased opt-outs by 31% by replacing “Don’t share my data” with “Learn about privacy settings”? Liability.

The metrics looked great. The business impact was measurable. But the mechanism was deception, and deception—even highly effective, carefully A/B tested deception—is now a regulatory target with billion-dollar consequences.

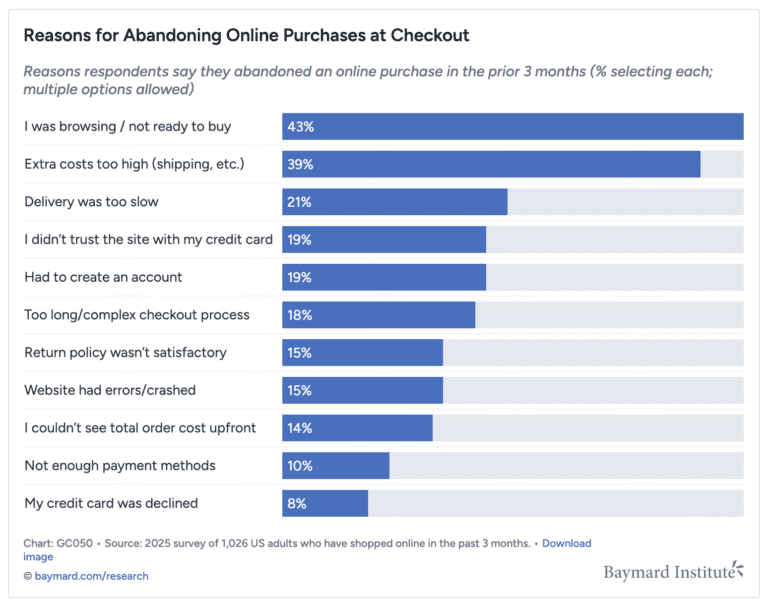

Many dark patterns are common in digital products: automatically enrolling users in subscriptions without explicit consent, trapping users in unwanted agreements by making cancellation difficult, and adding items to the user’s shopping cart without their knowledge. Other tactics include hidden fees that only appear at checkout, misleading discounts, disguised ads, and confirmshaming, where the opt-out is worded to make users feel guilty or foolish for declining (for example, “No thanks, I don’t like saving money”). Some patterns pressure users into quick decisions by creating a false sense of urgency or scarcity, often through fake countdowns or limited-time offers. These manipulative designs can also result in greater data collection for companies at the expense of user autonomy and privacy.

Social proof is also frequently manipulated, with marketing teams generating fake reviews or ratings to influence user trust and decisions. People with low digital literacy, such as seniors or those with cognitive impairments, are especially vulnerable to these tactics. Additionally, some dark patterns work by leading users through confusing flows or ambiguous language to achieve company goals, rather than supporting clear and informed choices.

The Path Forward

The good news: designing for clarity, symmetry, and user agency doesn’t have to mean sacrificing business performance. It means getting better at persuasion through value proposition rather than persuasion through confusion.

Companies that invest now in building auditable, user-favorable interfaces won’t just avoid regulatory risk. They’ll also foster customer trust and long-term customer loyalty—because when your retention strategy requires that users understand their options and still choose to stay, you’re forced to make your product actually worth staying for.

That’s a harder design challenge than adding friction to the cancel button. But it’s the only one that doesn’t come with a potential nine-figure settlement attached.

The Amazon case isn’t an anomaly. It’s a signal. Dark patterns just got a price tag, and for most companies, it’s a price they can’t afford to pay.

In 2026, the question won’t be “Did this test win?” It will be “Can this win survive scrutiny?”

Start designing accordingly.